Algorave

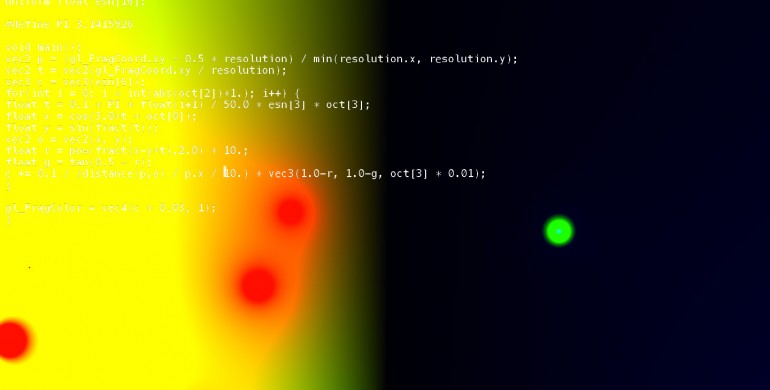

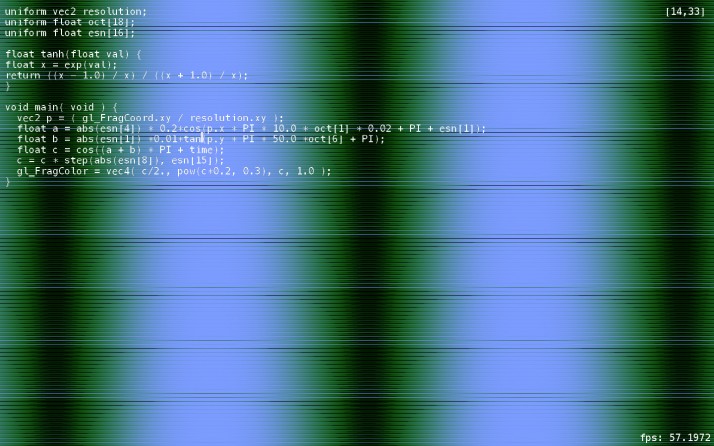

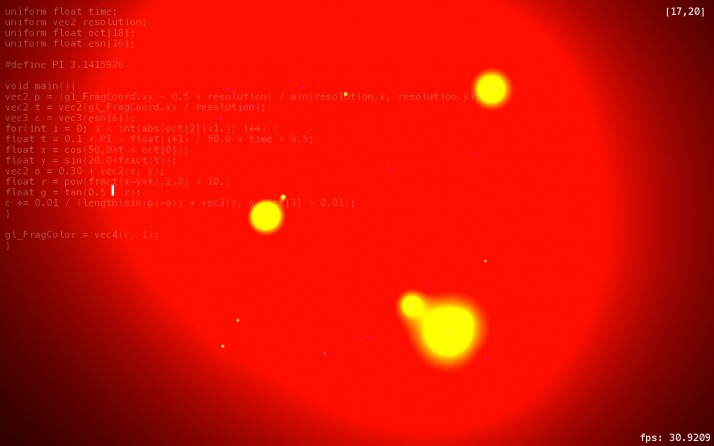

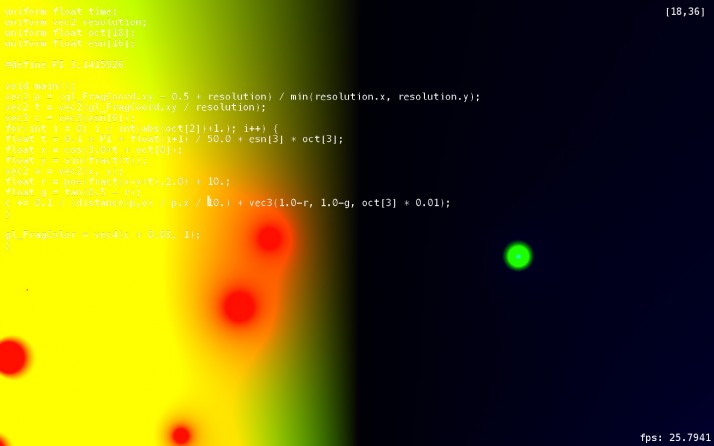

These images were created by Luuma, aka Chris Kiefer, during his outstanding live coding set at our Algorave. Chris explains how the visuals were created:

I’ve uploaded a sketch of my live setup here, which explains a bit where the audiovisual system fits in. Here’s a short explanation:

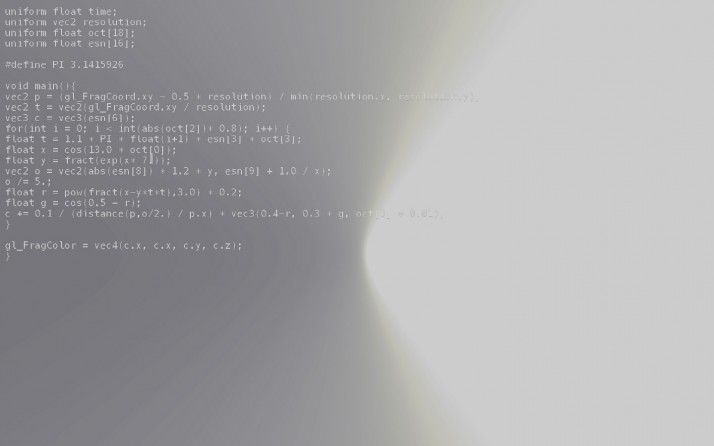

The code creates fragment shaders which run on the graphics processor. The audio system sends these shaders data about frequency strengths in the sound that’s playing, and energy levels from an Echo State Network that runs at the centre of the system. The shaders use this to create visuals. The visuals are then analysed, and the resulting data influences the audio synthesis system, making a feedback loop between sound and video. The feedback levels are modulated live using a MIDI controller.
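To make the loop a little more concrete, here is a toy sketch in plain Python. It is not Chris's code: every function name is hypothetical, the "shader" is reduced to a single brightness value computed on the CPU, the Echo State Network is a tiny random reservoir, and the `feedback` argument merely stands in for the level that would be set from a MIDI controller. It only illustrates the shape of the idea: sound is analysed, the analysis plus the network's energy drive the visuals, and a measurement of the visuals is fed back into the sound.

```python
import math
import random


def make_reservoir(size, scale=0.9, seed=0):
    """Random input and recurrent weights for a tiny echo state network (assumed setup)."""
    rng = random.Random(seed)
    w_in = [rng.uniform(-1.0, 1.0) for _ in range(size)]
    # Rough 1/sqrt(size) scaling so the recurrent term does not saturate tanh.
    w = [[rng.uniform(-1.0, 1.0) * scale / math.sqrt(size) for _ in range(size)]
         for _ in range(size)]
    return w_in, w


def step_reservoir(state, w_in, w, u):
    """One reservoir update: x' = tanh(W x + W_in u)."""
    n = len(state)
    return [math.tanh(sum(w[i][j] * state[j] for j in range(n)) + w_in[i] * u)
            for i in range(n)]


def energy(state):
    """Mean squared activation, used here as the network's 'energy level'."""
    return sum(x * x for x in state) / len(state)


def band_strengths(samples, n_bands):
    """Naive DFT magnitudes for bins 1..n_bands (stand-in for real audio analysis)."""
    n = len(samples)
    out = []
    for k in range(1, n_bands + 1):
        re = sum(s * math.cos(2 * math.pi * k * t / n) for t, s in enumerate(samples))
        im = sum(s * math.sin(2 * math.pi * k * t / n) for t, s in enumerate(samples))
        out.append(math.hypot(re, im) / n)
    return out


def fake_shader_brightness(bands, esn_energy):
    """Stand-in for rendering plus image analysis: squashes the inputs into [0, 1)."""
    return math.tanh(sum(bands) + esn_energy)


def run(frames=8, feedback=0.5, size=16, block=64):
    """Run the toy loop and return the brightness measured on each frame."""
    w_in, w = make_reservoir(size)
    state = [0.0] * size
    brightness = 0.0
    history = []
    for _ in range(frames):
        # Video-to-audio path: last frame's brightness shifts the oscillator pitch.
        freq_bin = 2 + feedback * 4 * brightness
        samples = [math.sin(2 * math.pi * freq_bin * t / block) for t in range(block)]
        # Audio-to-video path: spectrum and reservoir energy drive the "shader".
        bands = band_strengths(samples, n_bands=8)
        state = step_reservoir(state, w_in, w, u=max(bands))
        brightness = fake_shader_brightness(bands, energy(state))
        history.append(brightness)
    return history


if __name__ == "__main__":
    for level in (0.0, 0.5, 1.0):
        print(level, [round(b, 3) for b in run(feedback=level)])
```

Calling `run(feedback=0.0)` cuts the video-to-audio path, so the oscillator stays at a fixed pitch and only the reservoir state changes from frame to frame; raising `feedback` lets the measured brightness bend the pitch, which is a crude analogue of turning up the feedback level during a performance.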